Focused deep dive

Ask Synth + Flow: turning retail AI agents into daily operator workflows.

A focused product-agent case study on Ask Synth, Flow, Playbook, LangGraph-style orchestration, tool-calling guardrails, output cleanup, and operator-facing decision support.

Role

Fullstack AI/ML Engineer

Status

Evidence-backed deep dive

Evidence visual

HeySynth

Flagship product evidence, shown inline so the reader stays inside the case study.

10 proof assets

3 operator surfaces: Ask Synth, Flow, Playbook

2 system concerns: orchestration reliability and output trust

1 product lens: agentic AI that supports daily operations

Technical Scope

Stack

Story Snapshot

The short version before the deep dive.

Situation

Retail teams needed AI assistance that could summarize risk, explain impact, and fit into existing execution routines.

Problem

Generic chat UX was not enough. Agents needed to avoid loop behavior, suppress internal tool noise, and return grounded outputs teams could act on.
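As a rough illustration of what suppressing internal tool noise can look like, here is a minimal sketch. The message shape (`role`, `content`), the bracketed `[tool: ...]` marker format, and the function name `clean_for_operator` are assumptions made for this example, not HeySynth's actual schema or code.

```python
import re

# Hypothetical marker format for internal lines, e.g. "[tool: query_stock]".
INTERNAL_LINE = re.compile(r"^\s*\[(tool|debug|trace)\b.*\]\s*$", re.IGNORECASE)


def clean_for_operator(messages):
    """Return only the assistant text an operator should see.

    `messages` is assumed to be a list of dicts with "role" and "content" keys.
    """
    visible = []
    for msg in messages:
        if msg.get("role") != "assistant":
            continue  # drop raw tool results, system prompts, and user echoes
        # Remove lines that look like internal tool or debug markers.
        lines = [
            line
            for line in msg.get("content", "").splitlines()
            if not INTERNAL_LINE.match(line)
        ]
        text = "\n".join(lines).strip()
        if text:  # skip assistant turns that were only tool calls
            visible.append(text)
    return "\n\n".join(visible)
```

Filtering at the rendering boundary keeps the full trace available for debugging while the operator sees only the grounded answer.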

Direction

I focused on orchestration safety and productized UX: Ask Synth for reports, Flow for signal triage, and Playbook for repeatable agent workflows.

Case Study Narrative

Problem, solution, and the thinking behind the system.

A product story anchored in the screens, workflows, and implementation evidence behind the build.

01 / Situation

Agents had to support operator decisions, not just produce fluent responses.

HeySynth operators asked practical questions about stock risk, impacted SKUs, and revenue exposure. They needed answers they could review and act on quickly.

A chat-only assistant was not enough. The product needed structured output, context continuity, and clear follow-up paths in the same workflow.

That made this an agentic product problem where orchestration quality and interface quality had equal weight.

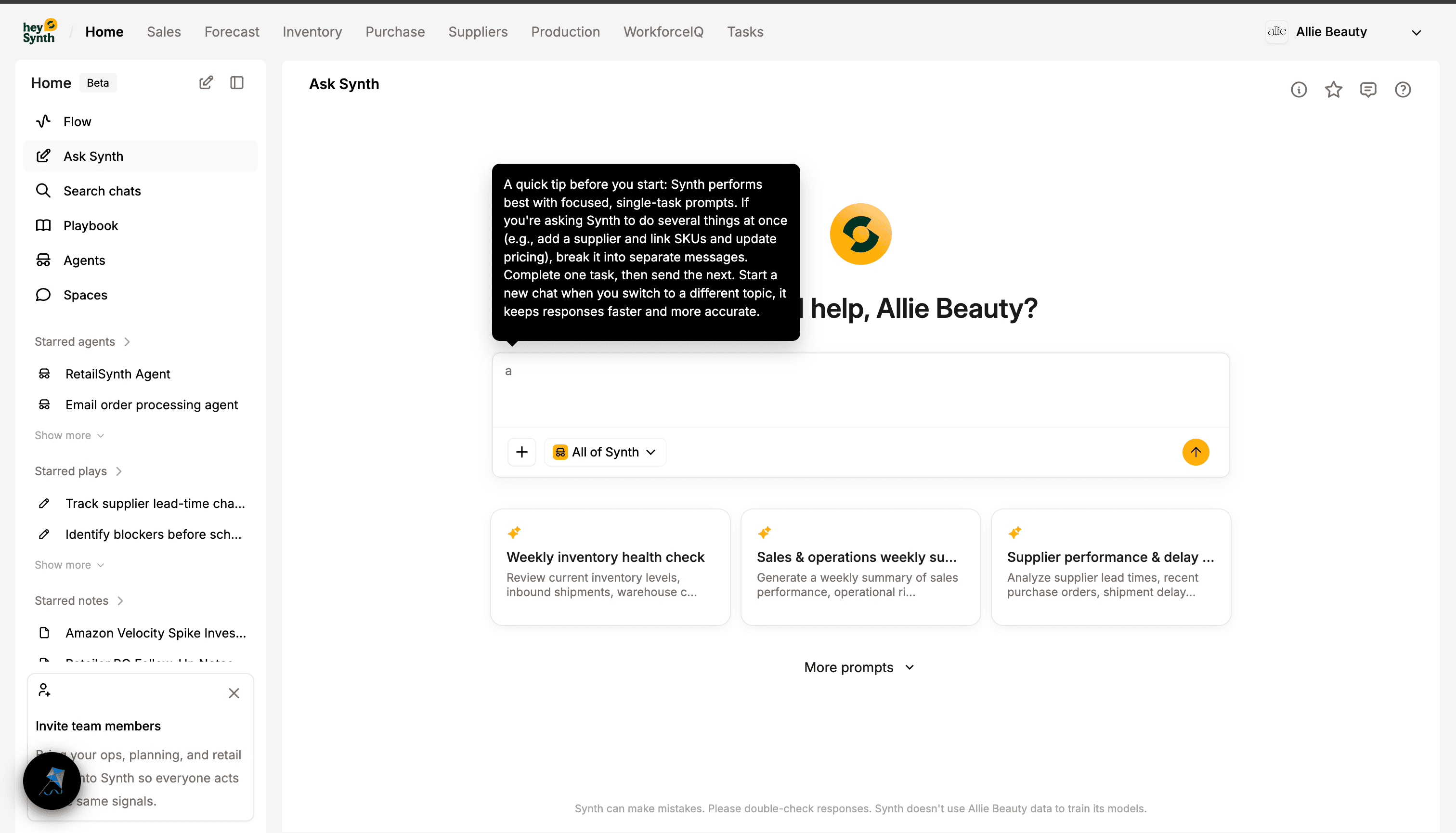

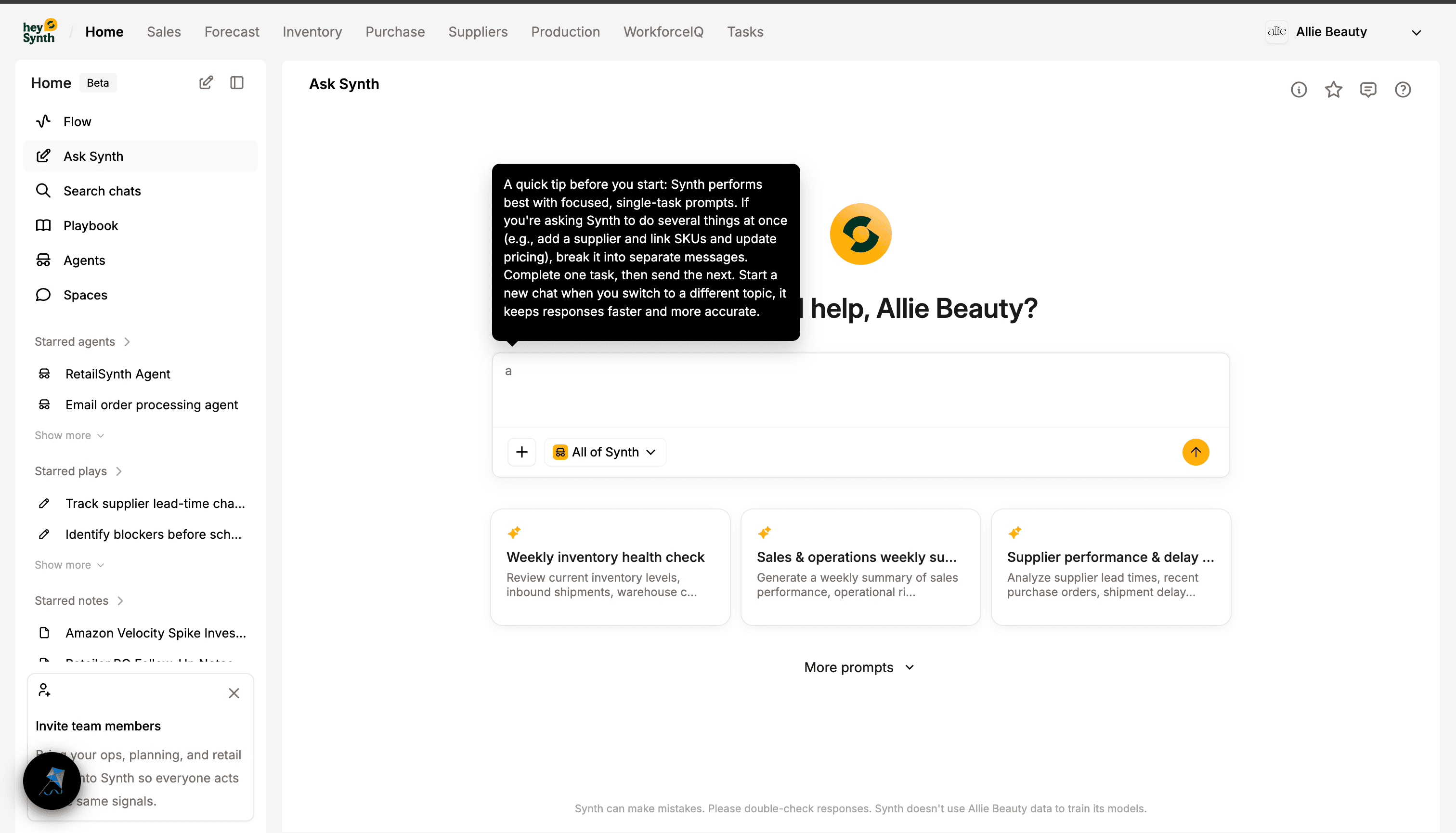

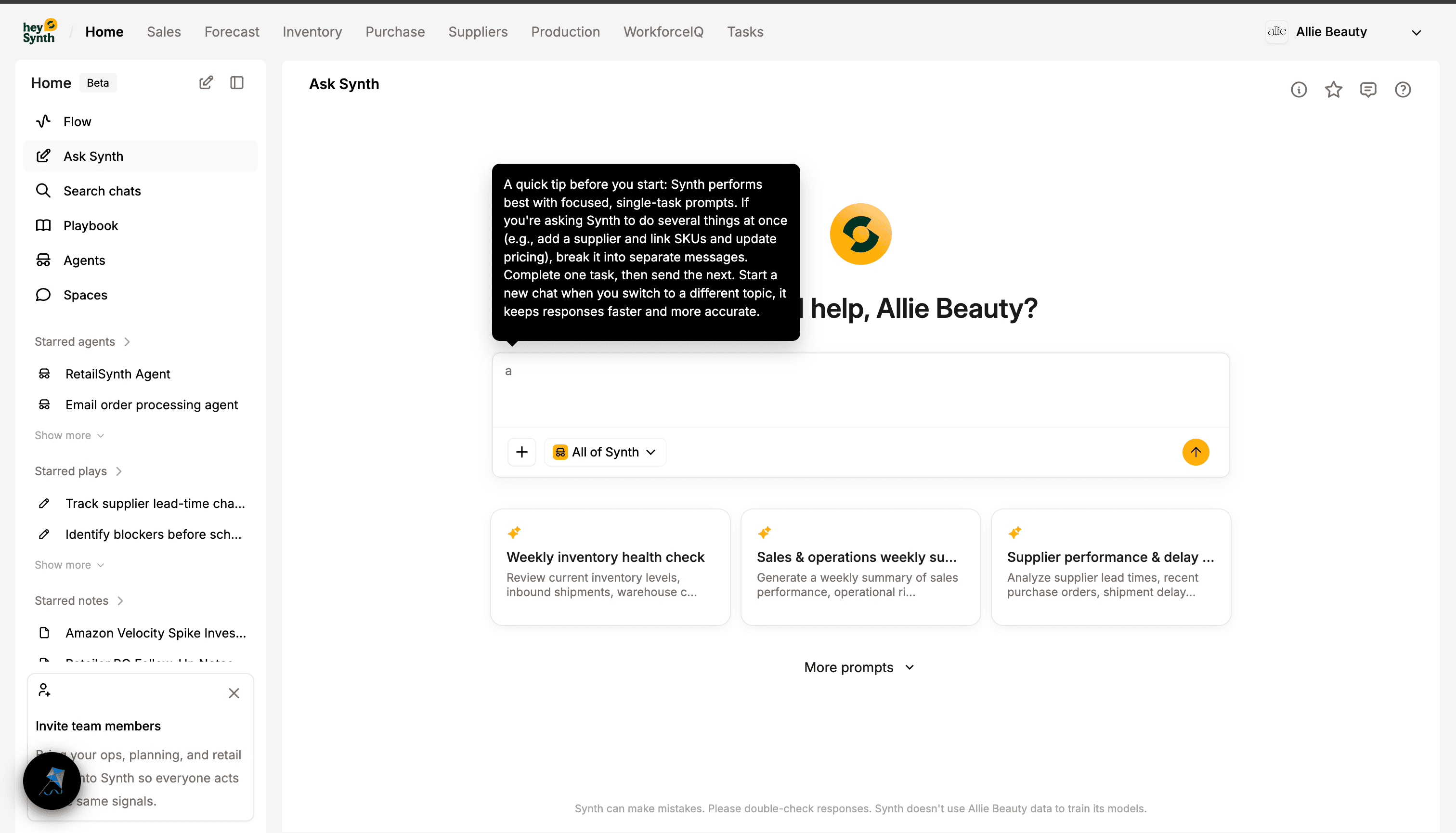

02 / Ask Synth

Conversational UX was grounded in retail reporting tasks.

Ask Synth was shaped to return operationally useful responses instead of generic chat prose.

I worked across frontend and agent-touching behavior so prompts, follow-ups, and generated reports stayed readable and business-grounded.

This reduced the gap between asking a question and receiving a decision-ready artifact.

Image proof

Ask Synth: grounded prompt and response flow

Operator-facing surface for generating retail-specific reports from natural-language prompts.

Image proof

Ask Synth report workflow variant

Additional evidence of report-style output and follow-up workflow state.

03 / Flow

Flow translated distributed AI signals into a daily operating cockpit.

Flow grouped signals by urgency so teams could start from what changed overnight instead of scanning disconnected dashboards.

I treated it as workflow infrastructure: clear severity framing, status context, and clean handoff from signal to action.

The outcome was practical trust because users could see why an issue mattered and decide quickly.
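The urgency grouping described above can be sketched roughly as follows. The three states (critical, aware, healthy) come from the Flow interface; the `Signal` fields and the `triage` function are hypothetical names chosen for this example, not the production data model.

```python
from dataclasses import dataclass

# Display order for the board: most urgent first.
SEVERITY_ORDER = ("critical", "aware", "healthy")


@dataclass(frozen=True)
class Signal:
    title: str
    severity: str  # one of SEVERITY_ORDER
    reason: str    # short "why this matters" text shown to the operator


def triage(signals):
    """Group signals by severity, keeping arrival order within each group."""
    board = {level: [] for level in SEVERITY_ORDER}
    for signal in signals:
        if signal.severity not in board:
            raise ValueError(f"unknown severity: {signal.severity!r}")
        board[signal.severity].append(signal)
    return board
```

Carrying a `reason` with every signal reflects the point above: users trust a priority when they can see why the issue matters.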

Image proof

Flow: priority-based operations surface

Signal triage interface for critical, aware, and healthy operational states.

Image proof

Flow operations board variant

Supporting signal board view showing workflow prioritization across alert states.

04 / Playbook

Repeated successful agent tasks became reusable workflows.

Once teams found high-value prompts, Playbook let them reuse runs instead of rebuilding the same interaction every time.

This shifted agents from ad hoc usage to repeatable routines with visible run history.

It improved adoption by moving value from one-off chat moments to team-level workflows.
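One way to picture a reusable play with visible run history is the sketch below. The `Play` and `Run` classes, their fields, and the `agent` callable are illustrative assumptions; the actual Playbook implementation is not shown in this case study.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Run:
    started_at: datetime
    status: str  # "succeeded" or "failed"
    output: str  # agent output, or the error message on failure


@dataclass
class Play:
    name: str
    prompt: str  # the saved high-value prompt
    runs: list = field(default_factory=list)

    def run(self, agent):
        """Execute the saved prompt with `agent` (any callable taking a prompt
        string and returning text) and record the result in the run history."""
        started = datetime.now(timezone.utc)
        try:
            result = Run(started, "succeeded", agent(self.prompt))
        except Exception as exc:  # record failures instead of losing them
            result = Run(started, "failed", str(exc))
        self.runs.append(result)
        return result
```

Recording failed runs alongside successful ones is what makes the history useful to a team: a play that silently stops producing output is harder to trust than one that shows its failures.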

Image proof

Playbook: reusable AI operations

Reusable run surfaces that turn recurring AI tasks into operational workflows.

Image proof

Playbook run detail

Run-level workflow detail showing reusable agent execution states and outputs.

05 / Production Evidence

The current proof set already shows a live agent workflow stack.

Ask Synth screenshots show grounded prompts and report-style outputs tied to real retail decisions.

Flow and Playbook surfaces show how agent outputs become triage inputs and reusable workflow assets.

Together, these screens show the three surfaces working as one connected system in daily operations.

The agent story is backed by real UI and workflow evidence.

The case study documents shipped product engineering, not future intent.

Proof Layer

The work spanned multiple connected systems, not one isolated feature.

This is the proof layer: each stream maps to implementation history while keeping private repository and customer details out of the public page.

Ask Synth report experience

Agent UX + response quality

Problem

Operators needed answers they could act on, but generic assistant responses were often too vague or disconnected from workflow context.

What I Built

I worked on the Ask Synth interaction and rendering flow to support grounded prompts, cleaner generated reports, and better follow-up behavior for planning tasks.

The Ask Synth screens show structured out-of-stock and revenue-impact report output.

Implementation proof

Ask Synth generated operational report

Business-grounded response format that supports immediate planning decisions.

Flow signal orchestration surface

Operational decision UX

Problem

Important AI-derived signals were easy to miss without a prioritized view tied to business impact.

What I Built

I helped shape Flow as a triage cockpit where signals are grouped by urgency and presented with enough context for quick action.

Evidence is visible in the Flow interface where risk and priority are surfaced as operational categories.

Implementation proof

Flow operations cockpit

Priority-first signal layer that connects AI outputs to daily execution.

Playbook workflow productization

Reusable agent workflows

Problem

High-value prompts were repeated manually, causing inconsistency and weak team-level reuse.

What I Built

I contributed to Playbook surfaces and workflow behavior that turn recurring agent tasks into repeatable runs with visible status and output history.

Proof is present in Playbook screens that show active plays, run tracking, and reusable operational patterns.

Implementation proof

Playbook run management

Workflow surface for managing recurring agent runs and outputs.

Why It Matters

This was not a single feature. It was production AI ownership across the stack.

Evidence

The agentic product lens is distinct from forecasting: it centers on orchestration trust, output quality, and workflow adoption.

Real screenshots already validate Ask Synth, Flow, and Playbook as connected operator surfaces.

Demonstrates fullstack AI ownership where backend/agent behavior and frontend product UX are co-designed.

What this says about me

Strong fit for agentic AI product teams shipping operator-facing decision systems.

Shows ability to bridge orchestration internals with polished end-user workflows.

Demonstrates a production-minded approach to AI trust, not just model integration.

Evidence Library

A closer look at the product surfaces.

Curated proof from the workflows behind the story: the operating cockpit, Ask Synth, Playbook, forecasting, and forecast upload surfaces.

Decisions

Trade-offs I owned.

Prioritize grounded report-style outputs over generic conversational flair.

Harden orchestration behavior around loops, tool-noise suppression, and clean output rendering.

Turn repeated agent tasks into reusable playbooks so AI workflows become operational assets.
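To make the loop-hardening decision concrete, here is a minimal, framework-agnostic sketch of a loop guard. It is an assumption about how such a guard could work, not the production orchestration code: the `LoopGuard` class, its thresholds, and the `check` method are hypothetical names. It stops an agent run when the total step budget is exhausted or when the same tool call repeats with identical arguments.

```python
import json


class LoopGuardError(RuntimeError):
    """Raised when an agent run looks stuck in a loop."""


class LoopGuard:
    def __init__(self, max_steps=8, max_repeats=2):
        self.max_steps = max_steps      # total tool calls allowed per run
        self.max_repeats = max_repeats  # identical calls allowed per run
        self.steps = 0
        self.seen = {}

    def check(self, tool_name, args):
        """Call before executing each tool call; raises if the run should stop."""
        self.steps += 1
        if self.steps > self.max_steps:
            raise LoopGuardError(f"step budget of {self.max_steps} exceeded")
        # Canonicalize arguments so key order does not hide a repeat.
        key = (tool_name, json.dumps(args, sort_keys=True))
        self.seen[key] = self.seen.get(key, 0) + 1
        if self.seen[key] > self.max_repeats:
            raise LoopGuardError(f"{tool_name} repeated with identical arguments")
```

A caller would create one guard per run, call `check` before each tool invocation, and on `LoopGuardError` return a short fallback message to the operator rather than a partial trace.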